Custom code is in every BI environment. But where exactly?

Over the past two years, we’ve spent a tremendous amount of time explaining the existence and risks of the hand-coded parts of business intelligence environments. We’ve covered pretty much everything: labor savings, easy impact analyses, fast automatic documentation, and more.

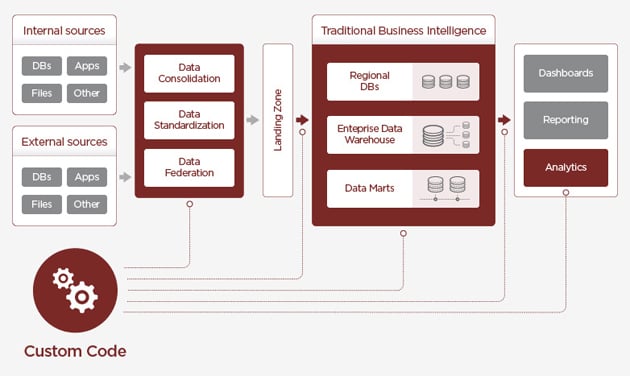

But one thing was missing: a good graphical representation. So we made one for you. Yeah, I know, every representation is simplified in some way. Still, we find it useful as a blueprint for discussions with our customers and prospects. So, where can you usually find custom code in BI?


1) Data Consolidation. The first table and first arrow represent the data consolidation process. The goal here is to pull data from all available systems, transform it, and ensure a certain level of data quality. This part is mostly handled either by hand-coded solutions or by ETL tools. Hand-coded solutions, quite common in smaller organizations, are typically a simple scheduler plus a large number of SQL scripts, usually stored procedures, some of which are partially generated automatically. ETL tools are used mostly in bigger enterprises. The problem is that using an ETL tool does not guarantee the absence of messy custom code. Think, for example, of more complex business logic that is hard to implement with ETL tools, or of the SQL overrides so often used in Informatica PowerCenter and similar tools. Custom code is present in every environment there is.
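To make the hand-coded variant more concrete, here is a minimal sketch in Python, assuming SQLite as a stand-in database (SQLite has no stored procedures, so plain SQL scripts play that role). The table names (`src_crm_customers`, `src_erp_customers`, `stg_customers`, `dim_customer`), the step list, and the `run_pipeline` helper are all hypothetical; the point is that the business logic lives in free-form SQL strings rather than in any tool's metadata.

```python
import sqlite3

# Hypothetical consolidation scripts. In a real environment these would
# typically be stored procedures or .sql files called by a scheduler.
SCRIPTS = [
    ("load_staging", """
        CREATE TABLE IF NOT EXISTS stg_customers (
            customer_id INTEGER, name TEXT, source TEXT);
        DELETE FROM stg_customers;
        INSERT INTO stg_customers
            SELECT id, TRIM(full_name), 'crm' FROM src_crm_customers;
        INSERT INTO stg_customers
            SELECT cust_no, TRIM(cust_name), 'erp' FROM src_erp_customers;
    """),
    ("quality_check", """
        -- Data quality rule hidden in SQL: drop rows without a usable name.
        DELETE FROM stg_customers WHERE name IS NULL OR name = '';
    """),
    ("build_dim", """
        CREATE TABLE IF NOT EXISTS dim_customer (
            customer_id INTEGER PRIMARY KEY, name TEXT);
        DELETE FROM dim_customer;
        INSERT INTO dim_customer
            SELECT customer_id, MIN(name) FROM stg_customers
            GROUP BY customer_id;
    """),
]


def run_pipeline(conn):
    """Minimal 'scheduler': run each script in order; an exception stops the run."""
    executed = []
    for name, sql in SCRIPTS:
        conn.executescript(sql)
        executed.append(name)
    return executed


def demo():
    """Set up two hypothetical source tables, run the pipeline, return the dimension."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE src_crm_customers (id INTEGER, full_name TEXT);
        CREATE TABLE src_erp_customers (cust_no INTEGER, cust_name TEXT);
        INSERT INTO src_crm_customers VALUES (1, ' Alice '), (2, '');
        INSERT INTO src_erp_customers VALUES (1, 'Alice'), (3, 'Bob');
    """)
    run_pipeline(conn)
    rows = conn.execute(
        "SELECT customer_id, name FROM dim_customer ORDER BY customer_id"
    ).fetchall()
    conn.close()
    return rows


if __name__ == "__main__":
    print(demo())
```

Even in this toy version, the rule that decides which customers survive (`quality_check`) exists only inside a SQL string, which is exactly the kind of logic that is hard to trace without reading the code itself.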

2) Traditional DWH Area. The fourth table summarizes the whole “traditional DWH area”, usually meaning an operational (sometimes even real-time) data store, an enterprise data warehouse, and the data marts and databases around it. Simple tasks in these environments are usually solved by foolproof vendor tools, but once the system reaches a certain level of complexity, custom code comes back into play. This is especially true when preparing data for business-oriented data marts (marketing, controlling, etc.).

3) Reporting. Reporting applications use data from the previous step, which is usually very well prepared in various data marts. But we no longer live in an era of predefined reports and dashboards. Today everyone wants to play with data; simply put, self-service BI is here. What does that mean? The number of reports and analyses is growing rapidly, and sometimes the data prepared in our data mart is simply not enough to get the numbers we need. So we transform the data a little more, and voilà! We typically use the most widespread transformation and data preparation language: SQL (or something very similar). Today the reports themselves are built with nice-looking UIs and wizards, but for various reasons (usually performance), they often contain a lot of SQL overrides.
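A hedged illustration of such a report-level SQL override, again using Python with SQLite as a stand-in: the hypothetical data mart table `mart_sales` holds revenue per region and month, and a self-service report needs each region's share of the monthly total, which the mart does not provide. The table, its columns, the sample rows, and the `report_query` helper are assumptions for illustration only.

```python
import sqlite3

# The "override": extra transformation written directly into the report,
# on top of data that was already prepared in the data mart.
REPORT_SQL = """
    SELECT s.month, s.region, s.revenue,
           ROUND(100.0 * s.revenue / t.total, 1) AS pct_of_month
    FROM mart_sales AS s
    JOIN (SELECT month, SUM(revenue) AS total
          FROM mart_sales GROUP BY month) AS t
      ON t.month = s.month
    ORDER BY s.month, s.region
"""


def report_query():
    """Build a tiny hypothetical data mart and run the report's own SQL on it."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE mart_sales (region TEXT, month INTEGER, revenue INTEGER)"
    )
    conn.executemany(
        "INSERT INTO mart_sales VALUES (?, ?, ?)",
        [("East", 1, 300), ("West", 1, 100), ("East", 2, 50), ("West", 2, 150)],
    )
    rows = conn.execute(REPORT_SQL).fetchall()
    conn.close()
    return rows


if __name__ == "__main__":
    for row in report_query():
        print(row)
```

The join against a per-month subquery could also be written with a window function (`SUM(revenue) OVER (PARTITION BY month)`) on databases that support it. Either way, this logic exists only in the report definition, not in the data mart.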

4) Analytics. And analytics… this is a completely new, “full-of-custom-code” story. Over the last year the Big Data and Data Science boom has changed everything we know about data management. And it is just the beginning! Enterprises are slowly drowning in data swamps (sorry, data lakes). PhDs and MScs are playing with data, creating smart algorithms for predictions or segmentations, and literally everything is hand-coded in a variety of languages, ranging from R and Python to good old SQL (or Hadoop languages such as HiveQL and Pig Latin).
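On the analytics side, even a simple segmentation ends up as a hand-written script. The sketch below is a hypothetical example in Python (standard library only) that splits customers into spend tertiles; the function name, the tertile rule, and the input format (a dict of customer to total spend) are assumptions chosen for illustration, not a recommended method.

```python
from statistics import quantiles


def segment_customers(spend_by_customer):
    """Hypothetical segmentation: label customers 'low', 'mid' or 'high'
    by comparing their total spend against the two tertile cut points."""
    # quantiles(..., n=3) returns the two cut points splitting the data into thirds
    # (default 'exclusive' method; needs at least two data points).
    cut_low, cut_high = quantiles(spend_by_customer.values(), n=3)
    segments = {}
    for customer, spend in spend_by_customer.items():
        if spend <= cut_low:
            segments[customer] = "low"
        elif spend <= cut_high:
            segments[customer] = "mid"
        else:
            segments[customer] = "high"
    return segments


if __name__ == "__main__":
    print(segment_customers({"a": 10, "b": 20, "c": 30, "d": 40, "e": 50, "f": 60}))
```

The thresholds here are computed rather than hard-coded, but the segmentation rule itself still lives only in this script, invisible to the rest of the BI stack.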

There is no BI without chaotic and messy custom code. Gaining a full understanding of hard-coded scripts is necessary not only to know what is happening inside them, but also to make sure everything is working like a Swiss timepiece.

Any thoughts or comments? Please let us know via email at manta@mantatools.com. Also, don't forget to follow us on Twitter!